- Home

- About Us

- Work

- Journal

- Contact

- Shadow fight 2 apk download special edition

- Descent legends of the dark art

- Christmas onesie

- Mindomo crack

- Appily ever after rachel silverman

- Omnidisksweeper sierra

- Projared vagrant story

- Backbox 32m series elsewhere partnerswiggersventurebeat

- Blue angel aura quartz meaning

- Mediacenter seat

- Parasite eve faq

- Spiral of archimedes

- Beacon pines switch release date

- Sonos one speaker

- Javascript multibrowser lowerstring

Our open source system requires no human interaction when assessing the compatibility risk of a proposed privacy intervention. We build a classifier, trained on a dataset generated by a combination of compatibility data from the EasyList project and novel browser instrumentation, and find it is accurate to practical levels (AUC 0.88). In this work, we design and implement the first automated system for predicting when a filter list rule breaks a website. To scale to the size and scope of the Web, filter list authors need an automated system to detect when a new filter rule breaks websites, before that breakage has a chance to make it to end users. blocking tools still break large numbers of sites). rules are tailored narrowly to reduce the risk of breaking things) and error-prone (i.e.

The inability of filter list authors to evaluate the Web compatibility impact of a new rule before shipping it severely reduces the benefits of filter-list-based content blocking: filter lists are both overly-conservative (i.e. This is a result of enormity of the Web, which prevents filter list authors from broadly understanding the impact of a new blocking rule before they ship it to millions of users.

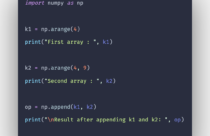

#Javascript multibrowser lowerstring code#

Our evaluations show that StyleX can improve the JavaScript code coverage achieved by a general-purpose crawler by up to 23\%.Ī core problem in the development and maintenance of crowd- sourced filter lists is that their maintainers cannot confidently predict whether (and where) a new filter list rule will break websites. Our actionable predictor models achieve 90.14\% precision and 87.76\% recall when considering the click event listener, and on average, 75.42\% precision and 77.76\% recall when considering the five most-frequent event types. In addition, our approach uses stylistic cues for ranking these actionable elements while exploring the app. Our approach, implemented in a tool called StyleX, employs machine learning models, trained on 700,000 web elements from 1,000 real-world websites, to predict actionable elements on a webpage a priori. To improve the efficacy of exploring the event space of web apps, we propose a browser-independent, instrumentation-free approach based on structural and visual stylistic cues. However, finding out which elements are actionable in web apps is not a trivial task.

We validate our approach on several open-source and industrial case studies to demonstrate its effectiveness and real-world relevance.Ī wide range of analysis and testing techniques targeting modern web apps rely on the automated exploration of their state space by firing events that mimic user interactions. Our approach consists of (1) automatically analyzing the given web application under different browser environ- ments and capturing the behavior as a finite-state machine (2) formally comparing the generated models for equivalence on a pairwise-basis and exposing any observed discrepan- cies. In this paper, we pose the problem of cross-browser compatibility testing of modern web applications as a 'func- tional consistency' check of web application behavior across different web browsers and present an automated solution for it. None of the current tools, which provide screenshots or emulation environments, specifies any notion of cross-browser compatibility, much less check it automat- ically. Although the problem is widely recognized among web developers, no systematic approach to tackle it exists today. With the advent of Web 2.0 applications and new browsers, the cross-browser compatibility issue is becoming increas- ingly important.
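
The pairwise comparison of per-browser finite-state machines described above can be illustrated with a minimal Python sketch. It is not the paper's algorithm: it assumes each browser's behavior has already been captured as a deterministic model that maps a state name to its outgoing events, and it only reports events that are available in one model but not the other. The names `compare_models` and `pairwise_report`, the browser labels, and the example states are all hypothetical.

```python
from collections import deque
from itertools import combinations

# Assumed representation: state name -> {event label -> next state name}.
Fsm = dict[str, dict[str, str]]


def compare_models(a: Fsm, b: Fsm, start_a: str, start_b: str) -> list[str]:
    """Walk two deterministic models in lockstep and list event mismatches."""
    issues: list[str] = []
    seen = {(start_a, start_b)}
    queue = deque([(start_a, start_b)])
    while queue:
        sa, sb = queue.popleft()
        events_a, events_b = a.get(sa, {}), b.get(sb, {})
        # Events offered by exactly one of the two models are discrepancies.
        for event in sorted(events_a.keys() ^ events_b.keys()):
            side = "first" if event in events_a else "second"
            issues.append(f"({sa}, {sb}): event '{event}' only in {side} model")
        # Follow shared events to the next pair of states.
        for event in sorted(events_a.keys() & events_b.keys()):
            pair = (events_a[event], events_b[event])
            if pair not in seen:
                seen.add(pair)
                queue.append(pair)
    return issues


def pairwise_report(models: dict[str, Fsm], start: str) -> dict[tuple[str, str], list[str]]:
    """Compare every pair of browser models, all starting from the same state."""
    return {
        (x, y): compare_models(models[x], models[y], start, start)
        for x, y in combinations(sorted(models), 2)
    }


# Hypothetical models: in browser_b the menu item never becomes clickable.
models = {
    "browser_a": {
        "home": {"click:menu": "menu"},
        "menu": {"click:item": "detail"},
        "detail": {},
    },
    "browser_b": {
        "home": {"click:menu": "menu"},
        "menu": {},
        "detail": {},
    },
}
report = pairwise_report(models, "home")
```

Here `report[("browser_a", "browser_b")]` contains a single entry flagging that `click:item` is reachable from the `menu` state only in the first model. A real checker would also need to compare the content of matched states, which this sketch leaves out.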